The spaces $C(\Omega)$ and $C^\infty(\Omega)$ are algebras under pointwise multiplication: the product of two continuous (or smooth) functions is again continuous (or smooth). Sobolev spaces $H^s(\Omega)$ inherit this property precisely when they embed into $L^\infty(\Omega)$, which happens when $s > d/2$. The algebra structure connects the embedding theory from the previous chapter to the bandedness of multiplication operators in spectral methods.

Why multiplication matters

So far we have used Sobolev spaces as linear function spaces: we add functions, take limits, and apply linear operators. But functions can also be multiplied, and a natural question arises: when is the pointwise product again in the same Sobolev space? The answer turns out to connect the embedding theory we just developed to the structure of multiplication operators in spectral methods.

The algebra property from embeddings

An algebra over $\mathbb{R}$ (or $\mathbb{C}$) is a vector space $A$ that also carries a multiplication, a bilinear map $A \times A \to A$ that is compatible with the linear structure. You already know several:

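| Space | Multiplication | Submultiplicative norm? |
|---|---|---|
| $\mathbb{R}^{n \times n}$ | Matrix product | $\lVert AB \rVert \le \lVert A \rVert \, \lVert B \rVert$ (operator norm) |
| $C(\overline{\Omega})$ | Pointwise: $(fg)(x) = f(x)\,g(x)$ | $\lVert fg \rVert_\infty \le \lVert f \rVert_\infty \lVert g \rVert_\infty$ |
| $L^\infty(\Omega)$ | Pointwise a.e. | $\lVert fg \rVert_\infty \le \lVert f \rVert_\infty \lVert g \rVert_\infty$ |
| $L^1(\mathbb{R}^d)$ | Convolution | $\lVert f * g \rVert_1 \le \lVert f \rVert_1 \lVert g \rVert_1$ (Young) |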

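A Banach algebra is a Banach space that is also an algebra and whose norm is submultiplicative: $\|uv\| \le \|u\|\,\|v\|$. This single inequality says that the multiplication is continuous as a bilinear map, or equivalently, that the space is closed under multiplication with quantitative control. (A bound of the form $\|uv\| \le C\|u\|\,\|v\|$ is just as good: replacing the norm by $C\|\cdot\|$ removes the constant.)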

Not every function space is an algebra. The product of two $L^2$ functions is generally only in $L^1$ (by Cauchy-Schwarz, $\|fg\|_{L^1} \le \|f\|_{L^2}\|g\|_{L^2}$, but $fg$ need not be in $L^2$). So $L^2$ with pointwise multiplication is not an algebra; multiplication takes you outside the space. The question for Sobolev spaces is: does the extra regularity fix this?
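A one-line worked example may make the failure in $L^2$ concrete. The function below is an illustrative choice on the interval $(0,1)$, not one taken from the text: it is square-integrable, but its square is not.

```latex
f(x) = x^{-1/3} \ \text{on } (0,1): \qquad
\int_0^1 |f|^2 \, dx = \int_0^1 x^{-2/3} \, dx = 3 < \infty,
\qquad
\int_0^1 |f \cdot f|^2 \, dx = \int_0^1 x^{-4/3} \, dx = \infty .
```

So $f \in L^2(0,1)$, while $f \cdot f = x^{-2/3}$ lies in $L^1(0,1)$ but not in $L^2(0,1)$.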

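The key observation is that $L^\infty$ is a Banach algebra (the sup norm is submultiplicative), so a Banach space of functions that embeds continuously into $L^\infty$ can inherit the algebra property. If $X \hookrightarrow L^\infty$ with $\|u\|_{L^\infty} \le C\|u\|_X$, then

$$
\|uv\|_X \le C'\bigl(\|u\|_{L^\infty}\|v\|_X + \|u\|_X\|v\|_{L^\infty}\bigr) \le 2CC'\,\|u\|_X\|v\|_X
$$

whenever the product rule and embedding estimate can be combined (the constant $C'$ comes from the product rule in $X$). The Sobolev embedding theorem (Theorem 2) gives $H^s(\Omega) \hookrightarrow L^\infty(\Omega)$ exactly when $s > d/2$. This is the threshold.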

Theorem 1 (Sobolev multiplication (Banach algebra property))

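Let $\Omega \subset \mathbb{R}^d$ be open with Lipschitz boundary (or $\Omega = \mathbb{R}^d$). If $s > d/2$, then $H^s(\Omega)$ is a Banach algebra under pointwise multiplication: there exists a constant $C = C(s, d, \Omega)$ such that

$$
\|uv\|_{H^s} \le C\,\|u\|_{H^s}\|v\|_{H^s} \qquad \text{for all } u, v \in H^s(\Omega).
$$

In particular, $H^s(\Omega)$ is closed under multiplication.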

Proof 1

We prove the case $s = m$ a positive integer; the fractional case requires interpolation. The strategy is to apply the Leibniz rule (product rule for higher derivatives) and then split each term so that one factor is controlled in $L^\infty$ via the Sobolev embedding.

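Step 1: Leibniz rule. For any multi-index $\alpha$ with $|\alpha| \le m$, the general Leibniz rule gives

$$
\partial^\alpha (uv) = \sum_{\beta \le \alpha} \binom{\alpha}{\beta}\, \partial^\beta u \; \partial^{\alpha - \beta} v .
$$

This is the higher-dimensional product rule: it distributes the derivatives between $u$ and $v$ in all possible ways. (For $|\alpha| = 1$ in one dimension, this is simply $(uv)' = u'v + uv'$.)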

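Step 2: Estimating each term. Each term in the Leibniz sum has the form $\partial^\beta u \, \partial^{\alpha-\beta} v$ where $|\beta| + |\alpha - \beta| = |\alpha| \le m$. We estimate in $L^2$ using Hölder's inequality with exponents $\infty$ and 2:

$$
\|\partial^\beta u \, \partial^{\alpha-\beta} v\|_{L^2} \le \|\partial^\beta u\|_{L^\infty}\, \|\partial^{\alpha-\beta} v\|_{L^2} .
$$

Since $u \in H^m$ and $|\beta| \le m$, the function $\partial^\beta u$ has at least $m - |\beta|$ remaining derivatives in $L^2$. When $m - |\beta| > d/2$, we can apply the Sobolev embedding $H^{m-|\beta|} \hookrightarrow L^\infty$ to get

$$
\|\partial^\beta u\|_{L^\infty} \le C\,\|\partial^\beta u\|_{H^{m-|\beta|}} \le C\,\|u\|_{H^m} .
$$

(More precisely, for the terms where $m - |\beta| > d/2$ we embed $\partial^\beta u$ directly into $L^\infty$; for the terms where instead $m - |\alpha - \beta| > d/2$ we swap the roles of $u$ and $v$. In one dimension these two cases cover every term. In higher dimensions there can be intermediate terms where neither factor has enough spare derivatives; these are handled by Hölder's inequality with exponents $1/p + 1/q = 1/2$ together with Sobolev embeddings into $L^p$ and $L^q$, and the condition $m > d/2$ leaves room for such exponents.)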

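Step 3: Combining. Summing over all $|\alpha| \le m$ gives

$$
\|uv\|_{H^m}^2 = \sum_{|\alpha| \le m} \|\partial^\alpha (uv)\|_{L^2}^2 \le C\,\|u\|_{H^m}^2 \|v\|_{H^m}^2 .
$$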

Example 1 ($H^1$ in one dimension)

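For $s = 1$ and $d = 1$, the condition $s > d/2$ becomes $1 > 1/2$, which holds. The proof is completely explicit:

$$
\|uv\|_{H^1}^2 = \|uv\|_{L^2}^2 + \|(uv)'\|_{L^2}^2 .
$$

For the first term: $\|uv\|_{L^2} \le \|u\|_{L^\infty}\|v\|_{L^2}$. For the second, the product rule gives $(uv)' = u'v + uv'$, so

$$
\|(uv)'\|_{L^2} \le \|u'\|_{L^2}\|v\|_{L^\infty} + \|u\|_{L^\infty}\|v'\|_{L^2} .
$$

By the Sobolev embedding $H^1 \hookrightarrow L^\infty$, we have $\|u\|_{L^\infty} \le C\|u\|_{H^1}$ and similarly for $v$. Combining:

$$
\|uv\|_{H^1} \le \|uv\|_{L^2} + \|(uv)'\|_{L^2} \le 3C\,\|u\|_{H^1}\|v\|_{H^1} .
$$

Each term in the estimate pairs derivatives on one factor with $L^\infty$ control on the other. The Sobolev embedding converts the $L^\infty$ norms into $H^1$ norms, closing the estimate.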
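The one-dimensional estimate can be sanity-checked numerically. The sketch below is an illustration under stated assumptions, not part of the proof: it works on the torus $[0, 2\pi)$ rather than an interval, uses the norm $\|u\|_{H^1}^2 = \sum_k (1+k^2)|\hat u_k|^2$, and takes the embedding constant $C = (\sum_k (1+k^2)^{-1})^{1/2} = \sqrt{\pi\coth\pi}$ that follows from Cauchy-Schwarz for that norm. The helper names `h1_norm` and `h1_product_ratio` are made up for this example.

```python
import numpy as np

def h1_norm(c, k):
    """H^1 norm on the torus from Fourier coefficients: sqrt(sum (1 + k^2) |c_k|^2)."""
    return np.sqrt(np.sum((1 + k**2) * np.abs(c) ** 2))

def h1_product_ratio(rng, K=8, N=64):
    """Return ||uv|| / (||u|| ||v||) in H^1 for random real trig polynomials of degree <= K."""
    k = np.fft.fftfreq(N, d=1.0 / N)       # integer wavenumbers in FFT ordering
    x = 2 * np.pi * np.arange(N) / N       # equispaced grid on [0, 2*pi)
    m = np.arange(K + 1)

    def random_trig_poly():
        a = rng.standard_normal(K + 1)
        b = rng.standard_normal(K + 1)
        return np.cos(np.outer(x, m)) @ a + np.sin(np.outer(x, m)) @ b

    u, v = random_trig_poly(), random_trig_poly()
    # uv has degree <= 2K < N/2, so the FFT recovers its coefficients without aliasing
    coeffs = lambda f: np.fft.fft(f) / N
    return h1_norm(coeffs(u * v), k) / (h1_norm(coeffs(u), k) * h1_norm(coeffs(v), k))

# Assumed embedding constant for this norm: ||u||_inf <= sqrt(pi * coth(pi)) * ||u||_{H^1}
bound = 3 * np.sqrt(np.pi / np.tanh(np.pi))

rng = np.random.default_rng(0)
ratios = [h1_product_ratio(rng) for _ in range(20)]
print(f"largest observed ratio: {max(ratios):.3f}, bound 3C: {bound:.3f}")
```

Random samples typically sit well below $3C$; the constant in the example is a convenient upper bound, not a sharp one.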

Remark 1 (Why $s > d/2$ is sharp)

The threshold $s > d/2$ cannot be improved. For $s = d/2$, the space $H^{d/2}$ does not embed into $L^\infty$ (functions in $H^{d/2}$ can have logarithmic singularities), and multiplication fails to map $H^{d/2}$ into itself. For instance, in $\mathbb{R}^2$, the function $u(x) = (\log(1/|x|))^{1/3}$ near the origin belongs to $H^1$ but $u^2$ does not.
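A concrete instance of this failure can be checked by hand. The computation below assumes the radial profile $u(x) = (\log(1/|x|))^{1/3}$ on the disc $|x| < 1/2$ in $\mathbb{R}^2$ (an illustrative choice), and uses the substitution $t = \log(1/r)$, $r = |x|$, so that $dr/r = -dt$.

```latex
u = t^{1/3}, \qquad
|\nabla u|^2 = \frac{1}{9}\,\frac{t^{-4/3}}{r^2}
\;\Longrightarrow\;
\int_{|x| < 1/2} |\nabla u|^2 \, dx
  = \frac{2\pi}{9} \int_{\log 2}^{\infty} t^{-4/3} \, dt < \infty ,

u^2 = t^{2/3}, \qquad
|\nabla (u^2)|^2 = \frac{4}{9}\,\frac{t^{-2/3}}{r^2}
\;\Longrightarrow\;
\int_{|x| < 1/2} |\nabla (u^2)|^2 \, dx
  = \frac{8\pi}{9} \int_{\log 2}^{\infty} t^{-2/3} \, dt = \infty .
```

Both $u$ and $u^2$ are square-integrable near the origin, so the obstruction is entirely in the gradient: $u$ has finite $H^1$ norm on the disc, while $u^2$ does not.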

Multiplication in frequency space: why regularity implies bandedness

The Banach algebra property tells us that multiplication by $u \in H^s$ is a bounded operator on $H^s$. But what does this operator look like when we expand in a spectral basis? The answer reveals a striking structural property: regularity forces approximate bandedness.

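To see this most cleanly, work on the torus $\mathbb{T} = \mathbb{R}/2\pi\mathbb{Z}$ with Fourier basis $e_k(x) = e^{ikx}$. Expand $u = \sum_k \hat{u}_k e^{ikx}$. The multiplication operator $M_u : v \mapsto uv$ has the matrix representation

$$
(M_u)_{jk} = \langle e_j, \, u\, e_k \rangle = \frac{1}{2\pi}\int_0^{2\pi} u(x)\, e^{-i(j-k)x}\, dx = \hat{u}_{j-k} .
$$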

Observation 1 (Multiplication is convolution in frequency)

The matrix of $M_u$ in the Fourier basis is a Toeplitz matrix: the $(j,k)$-entry depends only on $j - k$, and equals the Fourier coefficient $\hat{u}_{j-k}$. Multiplying by $u$ in physical space is convolution by $\hat{u}$ in frequency space.
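The observation can be checked directly for trigonometric polynomials. The following is a minimal sketch, assuming the convention $u(x) = \sum_k \hat{u}_k e^{ikx}$ on $[0, 2\pi)$ and random illustrative coefficients: it builds the Toeplitz block with entries $\hat{u}_{j-k}$ and compares its action with multiplication in physical space.

```python
import numpy as np

K = 4                                     # v has modes |k| <= K
rng = np.random.default_rng(0)
modes_u = np.arange(-2 * K, 2 * K + 1)    # offsets j - k range over [-2K, 2K]
modes_v = np.arange(-K, K + 1)
u_hat = rng.standard_normal(modes_u.size) + 1j * rng.standard_normal(modes_u.size)
v_hat = rng.standard_normal(modes_v.size) + 1j * rng.standard_normal(modes_v.size)

# Toeplitz block (M_u)_{jk} = u_hat_{j-k} for j, k in [-K, K]
offsets = modes_v[:, None] - modes_v[None, :]       # j - k
M = u_hat[offsets + 2 * K]                           # offset -2K sits at array index 0
w_toeplitz = M @ v_hat                               # convolution in frequency space

# Same coefficients via physical space: sample, multiply pointwise, transform back
N = 64                                               # 3K < N/2, so no aliasing occurs
x = 2 * np.pi * np.arange(N) / N
u = np.exp(1j * np.outer(x, modes_u)) @ u_hat
v = np.exp(1j * np.outer(x, modes_v)) @ v_hat
w_hat = np.fft.fft(u * v) / N                        # coefficients in FFT ordering
w_physical = w_hat[modes_v % N]                      # pick out modes -K, ..., K

print(np.max(np.abs(w_toeplitz - w_physical)))       # agreement up to rounding error
```

The grid size `N` is chosen large enough that the product is fully resolved; with a smaller grid the two results would differ by aliasing.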

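Now the connection to regularity becomes immediate. If $u \in H^s(\mathbb{T})$, then

$$
\sum_{k \in \mathbb{Z}} (1 + k^2)^s\, |\hat{u}_k|^2 = \|u\|_{H^s}^2 < \infty .
$$

In particular, the off-diagonal entries of $M_u$ decay:

$$
|(M_u)_{jk}| = |\hat{u}_{j-k}| \le \frac{\|u\|_{H^s}}{(1 + |j-k|^2)^{s/2}} .
$$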

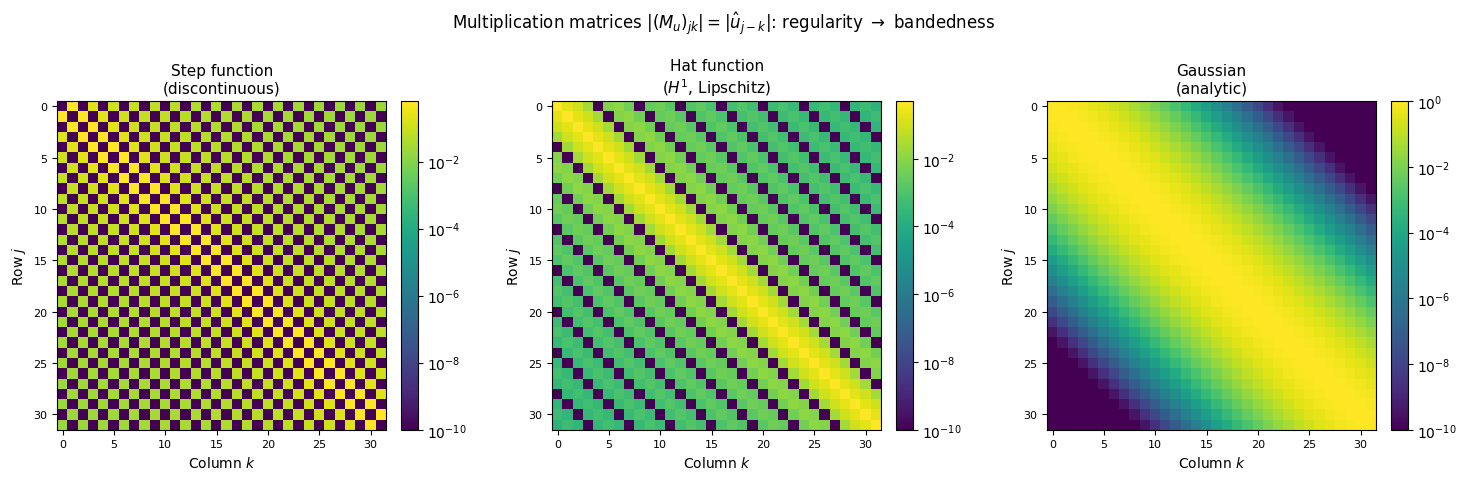

Proposition 1 (Off-diagonal decay of multiplication matrices)

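Let $u \in H^s(\mathbb{T})$ with $s > 1/2$. The multiplication operator $M_u$ in the Fourier basis satisfies

$$
|(M_u)_{jk}| \le \frac{\|u\|_{H^s}}{(1 + |j-k|^2)^{s/2}} .
$$

In particular:

- $s > 1/2$: entries decay at least like $|j-k|^{-s}$, and by Cauchy-Schwarz the entries along each row are absolutely summable, $\sum_k |\hat{u}_k| \le C_s \|u\|_{H^s}$.

- $s \ge 2$: entries decay at least like $|j-k|^{-2}$, giving rapid off-diagonal decay.

- $u \in C^\infty$: superalgebraic decay (faster than any power of $|j-k|$), meaning the matrix is numerically banded (entries drop below machine precision within a number of diagonals that depends on $u$).

- $u$ analytic: exponential off-diagonal decay, $|\hat{u}_{j-k}| \le C e^{-\rho |j-k|}$ for some $\rho > 0$, making it essentially a banded matrix.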

The following picture makes this concrete.

```python
import numpy as np
import matplotlib.pyplot as plt
from matplotlib.colors import LogNorm

fig, axes = plt.subplots(1, 3, figsize=(15, 4.5))

N = 64                          # number of Fourier modes
k = np.arange(-N // 2, N // 2)  # centered wavenumbers -N/2, ..., N/2 - 1
nonzero = k != 0

# --- Three functions with increasing regularity ---

# 1. Step function (discontinuous, in H^{1/2-ε}): coefficients decay like 1/|k|
u_hat_step = np.zeros(N, dtype=complex)
u_hat_step[nonzero] = (1 - (-1.0) ** k[nonzero]) / (1j * np.pi * k[nonzero])

# 2. Hat function (Lipschitz, in H^1): coefficients decay like 1/k^2
u_hat_hat = np.full(N, 0.5, dtype=complex)   # the k = 0 coefficient is 0.5
u_hat_hat[nonzero] = 2 * (1 - np.cos(np.pi * k[nonzero] / 2)) / (np.pi * k[nonzero]) ** 2

# 3. Gaussian (analytic): coefficients decay like exp(-sigma^2 k^2 / 2)
sigma = 0.3
u_hat_gauss = np.exp(-sigma**2 * k**2 / 2)
u_hat_gauss = u_hat_gauss / np.max(np.abs(u_hat_gauss))

functions = [
    (u_hat_step, 'Step function\n(discontinuous)'),
    (u_hat_hat, 'Hat function\n($H^1$, Lipschitz)'),
    (u_hat_gauss, 'Gaussian\n(analytic)'),
]

# Offsets j - l (mod N) for every matrix entry, computed once
idx = np.arange(N)
offsets = (idx[:, None] - idx[None, :]) % N

for ax, (u_hat, title) in zip(axes, functions):
    # Reorder from centered ordering to FFT ordering (0, ..., N/2-1, -N/2, ..., -1)
    u_hat_std = np.fft.ifftshift(u_hat)

    # M[j, l] = u_hat_{j-l} with indices taken mod N. In the plotted 32x32 block
    # |j - l| < N/2, so there is no wrap-around and the block is exactly Toeplitz.
    M = u_hat_std[offsets]

    absM = np.maximum(np.abs(M), 1e-16)  # floor for log scale

    im = ax.imshow(absM[:32, :32], norm=LogNorm(vmin=1e-10, vmax=absM.max()),
                   cmap='viridis', aspect='equal')
    ax.set_title(title, fontsize=11)
    ax.set_xlabel('Column $k$', fontsize=10)
    ax.set_ylabel('Row $j$', fontsize=10)
    ax.tick_params(labelsize=8)
    plt.colorbar(im, ax=ax, fraction=0.046, pad=0.04)

plt.suptitle(r'Multiplication matrices $|(M_u)_{jk}| = |\hat{u}_{j-k}|$: regularity $\to$ bandedness',
             fontsize=12, y=1.02)
plt.tight_layout()
plt.show()
```

The multiplication matrix $M_u$ in the Fourier basis for three functions of increasing regularity. Left: a step function (discontinuous) produces a full, slowly-decaying matrix. Center: a hat function ($H^1$) produces algebraic off-diagonal decay. Right: a Gaussian (analytic) produces exponential decay, so the matrix is effectively banded. The color scale is logarithmic.

The intuition: regularity is frequency localization

Why should smoothness produce banded multiplication matrices? The intuition comes from combining three observations:

1. Regularity = frequency concentration. A function in $H^s$ has Fourier coefficients bounded by $\|u\|_{H^s}(1+k^2)^{-s/2}$, so they decay at least like $|k|^{-s}$. Higher regularity means the function's energy is concentrated at low frequencies.

2. Multiplication = convolution in frequency. The matrix $M_u$ is Toeplitz with entries $\hat{u}_{j-k}$. Applying $M_u$ to a vector of Fourier coefficients is convolution with the sequence $(\hat{u}_k)$.

3. Convolving with something narrow produces something narrow. If the sequence $(\hat{u}_k)$ is concentrated near $k = 0$ (because $u$ is smooth), then convolution with it only couples mode $j$ to nearby modes $k \approx j$, which is exactly the statement that $M_u$ is approximately banded.

Putting these together: smooth functions act locally in frequency space. Multiplying by a smooth function is like a short-range interaction between Fourier modes. Multiplying by a rough function is a long-range interaction that couples all modes to all other modes.

Remark 2 (Analogy with integral operators)

This pattern is familiar from integral operators. A kernel $K(x, y)$ produces a banded matrix in some basis when the kernel is "close to diagonal." For multiplication, the kernel is $K(x, y) = u(x)\,\delta(x - y)$, which is as diagonal as possible in physical space. But expanding in the Fourier basis, this perfectly diagonal operator becomes the Toeplitz matrix $(\hat{u}_{j-k})_{j,k}$, which is approximately banded only when $u$ is smooth. The same operator can be diagonal in one basis and banded in another; the bandedness in frequency reflects the smoothness in space.

Connection to spectral and collocation methods

This structure is exactly what makes spectral methods efficient for smooth problems.

In a pseudospectral (collocation) method, we want to compute the Fourier (or Chebyshev) coefficients of a product $uv$. The naive approach is to form $M_u$ and multiply, an $O(N^2)$ operation. But the collocation approach exploits a factorization:

$$
M_u \approx F \, D_u \, F^{-1}, \qquad D_u = \operatorname{diag}\bigl(u(x_0), \dots, u(x_{N-1})\bigr),
$$

where $F$ is the DFT matrix and $D_u$ contains the values of $u$ at collocation points. Multiplication is diagonal in physical space, so we:

1. Transform $\hat{v}$ to values at collocation points (inverse FFT, $O(N \log N)$),

2. Multiply pointwise: $w_j = u(x_j)\, v(x_j)$ ($O(N)$),

3. Transform back (forward FFT, $O(N \log N)$).

The total cost is $O(N \log N)$ instead of $O(N^2)$. But here is the subtle point: this procedure is exact only when the product $uv$ is itself a trigonometric polynomial of degree less than $N/2$. For general functions resolved with modes $|k| \le N/2$, the product $uv$ may have Fourier modes up to degree $N$, and the modes above $N/2$ get aliased back into the range $|k| \le N/2$.
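The three-step procedure and its aliasing can be seen on a tiny example. This is a sketch under the convention $u(x_j) = \sum_k \hat{u}_k e^{ikx_j}$ with $x_j = 2\pi j/N$; the helper names `pseudospectral_product` and `cos3_coeffs` are made up for the illustration. It squares $\cos(3x)$, whose exact square $\tfrac12 + \tfrac12\cos(6x)$ has a mode at $k = \pm 6$ that an 8-point grid cannot represent.

```python
import numpy as np

def pseudospectral_product(u_hat, v_hat):
    """Coefficients of uv via inverse FFT, pointwise product, forward FFT (FFT ordering)."""
    n = u_hat.size
    u = np.fft.ifft(u_hat) * n      # step 1: values at the collocation points x_j = 2*pi*j/n
    v = np.fft.ifft(v_hat) * n
    w = u * v                       # step 2: pointwise multiplication
    return np.fft.fft(w) / n        # step 3: back to Fourier coefficients

def cos3_coeffs(n):
    """Fourier coefficients of cos(3x) on an n-point grid, in FFT ordering."""
    c = np.zeros(n, dtype=complex)
    c[3] = 0.5      # mode k = +3
    c[-3] = 0.5     # mode k = -3
    return c

w8 = pseudospectral_product(cos3_coeffs(8), cos3_coeffs(8))     # modes -4..3 only
w16 = pseudospectral_product(cos3_coeffs(16), cos3_coeffs(16))  # modes -8..7

# On 8 points, mode +6 is indistinguishable from mode -2 (and -6 from +2)
print("N=8 : mode 2 coefficient =", w8[2].real)     # 0.25, pure aliasing
print("N=16: mode 2 coefficient =", w16[2].real)    # 0 up to rounding
print("N=16: mode 6 coefficient =", w16[6].real)    # 0.25, correctly resolved
```

Doubling the grid (or zero-padding the coefficient vectors before the inverse transform) resolves the product, at the price of larger FFTs.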

The Banach algebra property tells us this aliasing is harmless for smooth functions: since $uv \in H^s$, the aliased modes are exponentially small (for analytic $u, v$) or at least rapidly decaying (for Sobolev $u, v$). The error from aliasing is controlled by the off-diagonal decay of $M_u$, precisely the entries we truncate by working with a finite matrix.
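How fast the aliasing error shrinks can be measured directly. The sketch below is illustrative only: the two test functions ($e^{\cos x}$ as an analytic example, $|x - \pi|$ as a Lipschitz one whose square has Fourier coefficients decaying only like $k^{-2}$), the reference grid size, and the helper names are all assumptions made for this example. It compares the coefficients of a squared function computed on a coarse grid with those from a much finer grid.

```python
import numpy as np

def product_coeffs(f, g, n):
    """Pseudospectral coefficients of f*g from n equispaced samples (FFT ordering)."""
    x = 2 * np.pi * np.arange(n) / n
    return np.fft.fft(f(x) * g(x)) / n

def aliasing_error(f, g, n, n_ref=512):
    """Max difference between coarse-grid coefficients and a fine-grid reference."""
    coarse = product_coeffs(f, g, n)
    fine = product_coeffs(f, g, n_ref)
    k = np.fft.fftfreq(n, d=1.0 / n).astype(int)   # integer wavenumbers on the coarse grid
    return np.max(np.abs(coarse - fine[k % n_ref]))

analytic = lambda x: np.exp(np.cos(x))     # analytic and periodic
kinked = lambda x: np.abs(x - np.pi)       # Lipschitz, with a kink at x = pi

for n in (8, 16, 32):
    print(n, aliasing_error(analytic, analytic, n), aliasing_error(kinked, kinked, n))
```

In this experiment the analytic product reaches rounding-level error on a small grid, while the error for the kinked function decreases only algebraically in $N$.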

Remark 3 (Chebyshev vs. Fourier)

For Chebyshev spectral methods, the multiplication matrix is not Toeplitz but almost-banded (it has a Toeplitz-plus-Hankel structure): the Chebyshev expansion $u = \sum_n a_n T_n$ gives a multiplication matrix with entries involving the linearization coefficients of Chebyshev products ($T_m T_n = \tfrac12\bigl(T_{m+n} + T_{|m-n|}\bigr)$), and the matrix has the same off-diagonal decay governed by the rate of decay of the Chebyshev coefficients $a_n$. The story is identical: regularity of $u$ controls the coefficient decay, which controls the bandwidth. This is the algebraic reason why collocation methods produce banded or nearly-banded discrete operators for smooth problems.
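The Chebyshev product rule and the resulting band structure can be checked with NumPy's `numpy.polynomial.chebyshev.chebmul`, which multiplies two Chebyshev coefficient arrays. The sketch below is a small illustration; the coefficient vector `a` is an arbitrary assumed example of a function with only three nonzero Chebyshev coefficients.

```python
import numpy as np
from numpy.polynomial import chebyshev as C

# Product rule: T_2 * T_3 = (T_5 + T_1) / 2
T2 = np.array([0.0, 0.0, 1.0])
T3 = np.array([0.0, 0.0, 0.0, 1.0])
prod = C.chebmul(T2, T3)
print(prod)                                  # coefficients of T_0, ..., T_5

# Multiplication matrix for u = T_0 + 0.5 T_1 + 0.25 T_2 (illustrative coefficients):
# column k holds the Chebyshev coefficients of u * T_k, truncated to `size` rows
a = np.array([1.0, 0.5, 0.25])
size = 8
M = np.zeros((size, size))
for k in range(size):
    e_k = np.zeros(k + 1)
    e_k[k] = 1.0                             # coefficient vector of T_k
    col = C.chebmul(a, e_k)[:size]
    M[:col.size, k] = col

# Entries vanish whenever |j - k| exceeds the degree of u
j_idx, k_idx = np.indices(M.shape)
outside_band = np.abs(j_idx - k_idx) > a.size - 1
print(np.max(np.abs(M[outside_band])))
```

A function with infinitely many but rapidly decaying coefficients $a_n$ gives the same picture with small rather than exactly zero entries outside the band.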

Remark 4 (The Banach algebra property as a spectral guarantee)

From the numerical analyst's perspective, the Banach algebra property of $H^s$ is a stability guarantee for nonlinear spectral methods: if $u, v \in H^s$ and you form their product spectrally (with or without aliasing), the result stays in $H^s$ with a controlled norm. This is essential for the convergence theory of spectral methods applied to nonlinear PDEs: it ensures that the nonlinearity does not create uncontrolled high-frequency content that would destabilize the computation.